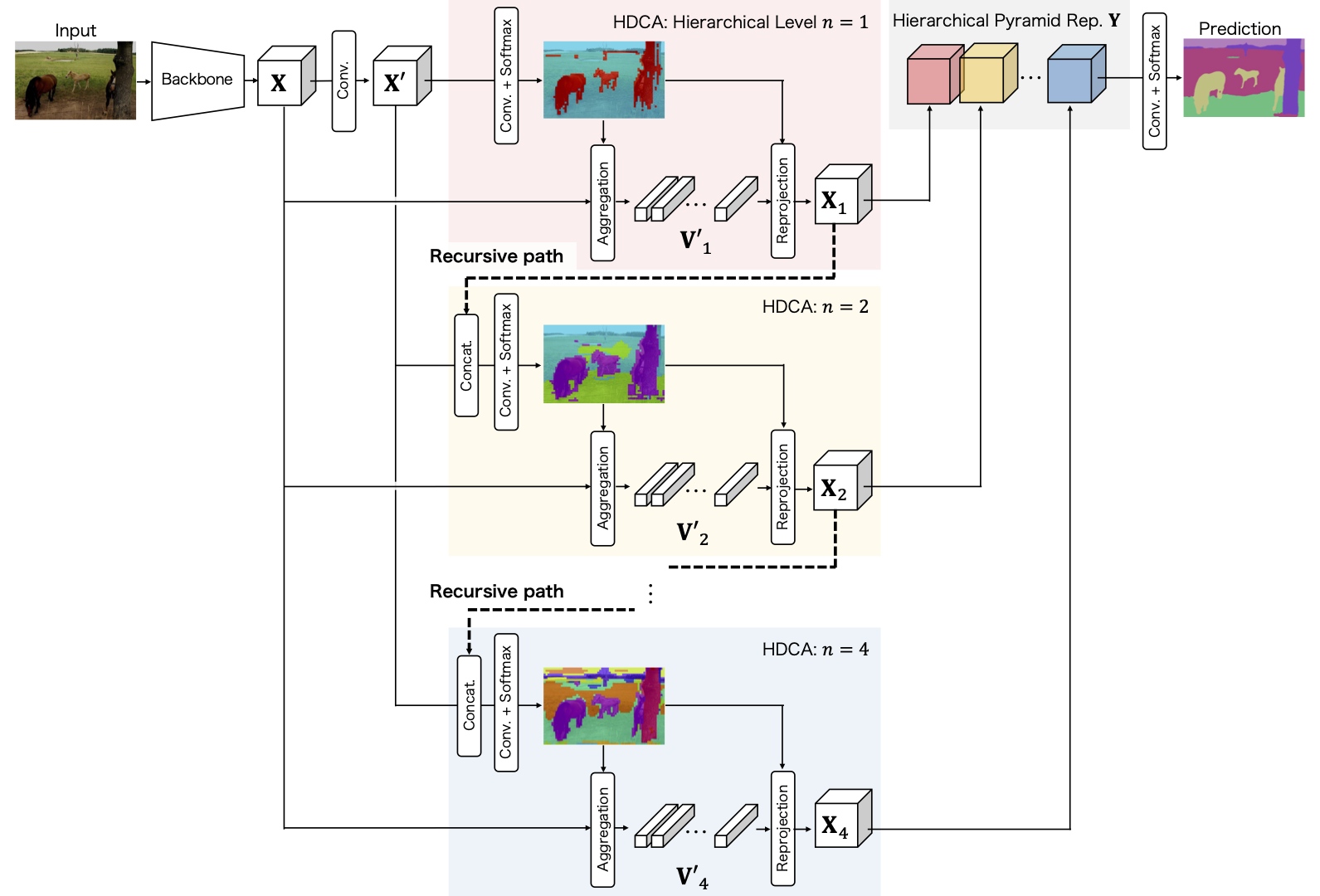

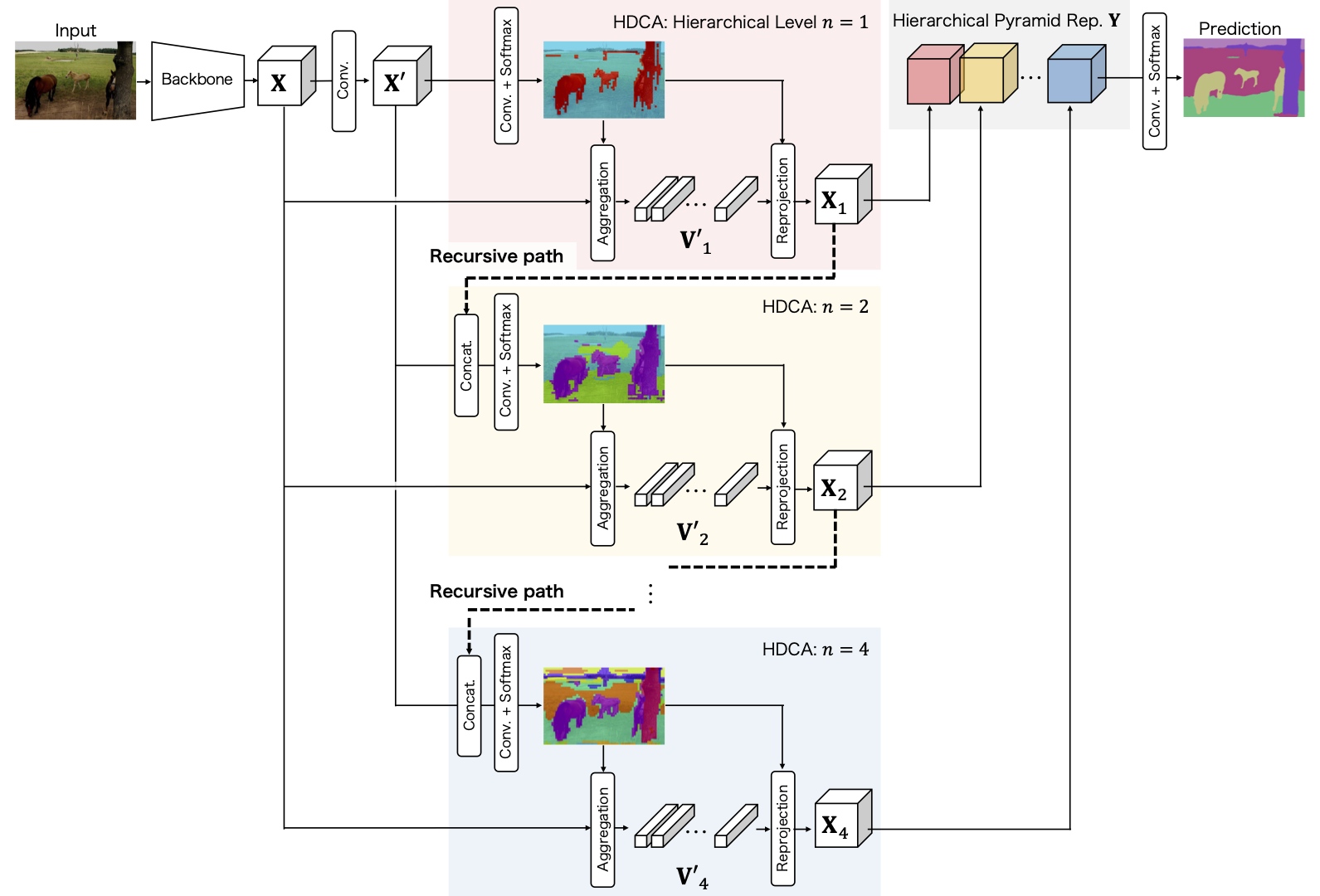

Understanding the context of complex and cluttered scenes is a challenging problem for semantic segmentation. However, it is difficult to model this context without priors or additional supervision, because factors such as the scale, shape, and appearance of objects vary considerably in such scenes. To address this, we propose to learn the structures of objects and the hierarchy among objects, because context is grounded in these intrinsic properties. In this study, we design novel hierarchical, contextual, and multiscale pyramidal representations that capture these properties from an input image. Our key idea is to recursively segment the image into hierarchical regions, using a predefined number of regions, and to aggregate the context within these regions. The aggregated contexts are used to predict the contextual relationships between the regions and to partition the regions at the next hierarchical level. Finally, by constructing pyramid representations from the recursively aggregated context, we obtain multiscale and hierarchical properties. In our experiments, we confirmed that the proposed method achieves state-of-the-art performance on PASCAL Context.
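The abstract does not give the exact formulation, so the following NumPy sketch only illustrates the general idea of recursive partitioning with context aggregation. It rests on several assumptions of our own. Each level uses a hypothetical linear classifier (`level_weights[l]`, shape `(C, K)`) that softly splits every region into `K` children. A region's context is taken to be the membership-weighted mean of its pixel features. The next-level split is conditioned on the features plus the context from the previous level. All function and parameter names are hypothetical, and this is not the implementation from the paper.

```python
import numpy as np


def softmax(x, axis):
    """Numerically stable softmax along `axis`."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def region_context(features, mass, eps=1e-6):
    """Context of each region as the membership-weighted mean of pixel features.

    features: (N, C) flattened pixel features; mass: (N, R) soft memberships.
    Returns an (R, C) array with one context vector per region.
    """
    weights = mass / (mass.sum(axis=0, keepdims=True) + eps)
    return weights.T @ features


def hierarchical_context(features, level_weights):
    """Recursively split regions and aggregate context at every level.

    level_weights[l] is a hypothetical (C, K) classifier that splits every
    region at level l into K child regions (same K for every parent).
    """
    n = features.shape[0]
    mass = np.ones((n, 1))  # level 0: one root region covering the whole image
    # Context of the root region is the global average of the features.
    pixel_ctx = np.repeat(features.mean(axis=0, keepdims=True), n, axis=0)
    pyramid, masses = [], []
    for w in level_weights:
        # The split is guided by the context aggregated at the previous level.
        child = softmax((features + pixel_ctx) @ w, axis=1)  # (N, K)
        # Each parent region is split into K children: (N, R) -> (N, R*K).
        mass = (mass[:, :, None] * child[:, None, :]).reshape(n, -1)
        ctx = region_context(features, mass)  # (R*K, C)
        # Rows of `mass` sum to 1, so each pixel gets a convex mix of contexts.
        pixel_ctx = mass @ ctx  # (N, C)
        pyramid.append(pixel_ctx)
        masses.append(mass)
    return pyramid, masses


def pyramid_representation(features, level_weights):
    """Concatenate the input features with the context from every level."""
    pyramid, _ = hierarchical_context(features, level_weights)
    return np.concatenate([features] + pyramid, axis=1)


rng = np.random.default_rng(0)
feats = rng.standard_normal((32 * 32, 16))  # a 32x32 feature map, 16 channels
weights = [rng.standard_normal((16, 2)) * 0.1 for _ in range(3)]  # 3 levels, K=2
rep = pyramid_representation(feats, weights)
print(rep.shape)  # (1024, 64)
```

With `K = 2` and three levels, the number of regions grows as 2, 4, 8. Because the memberships are soft products of per-level softmaxes, every step is differentiable, and deeper levels refine the coarser regions instead of re-segmenting from scratch. A real model would learn the classifiers end to end and operate on spatial feature maps. This sketch uses random weights purely to show the data flow.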

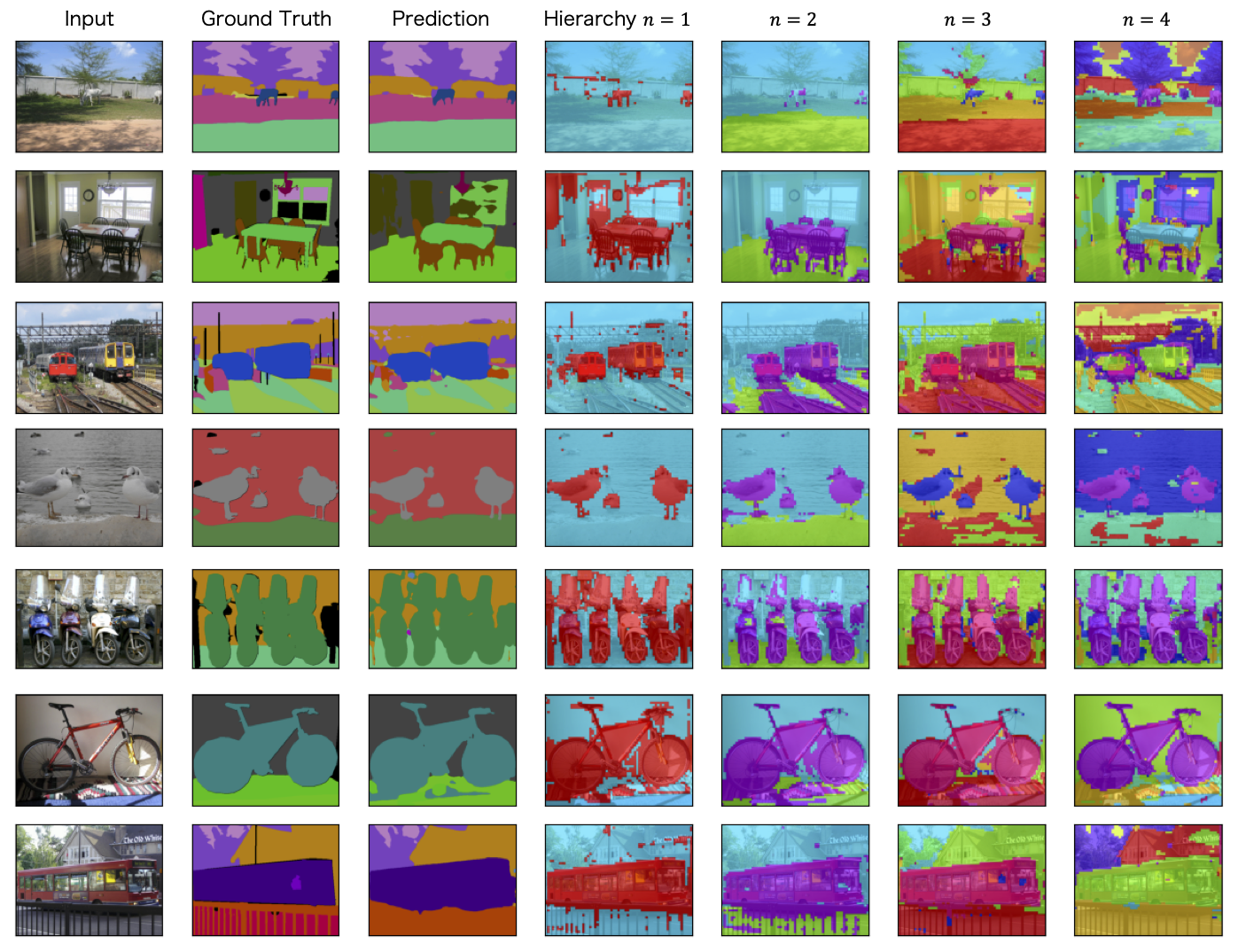

Semantic segmentation results and visualization of the hierarchical segmentation used for context aggregation on PASCAL Context, obtained with our proposed model. From left to right: input image, ground truth, final prediction, and segmentation results at each hierarchical level. Note that the colors in the hierarchical segmentation are consistent across hierarchical levels but do not indicate semantic classes, because they are determined by the region indices. See our paper for details.

This work is partially supported by Grants-in-aid for Promotion of Regional Industry-University-Government Collaboration from the Cabinet Office, Japan.